ChatGPT Data Security & GenAI Data Security for Enterprises

Prevent sensitive data (PII, PHI, IP) from leaking into ChatGPT, Microsoft Copilot, and other LLMs. Secuvy delivers AI data security and AI data governance using a self-learning, agentless control layer built for modern enterprises.

Why ChatGPT Enterprise Still Creates Data Leakage Risk

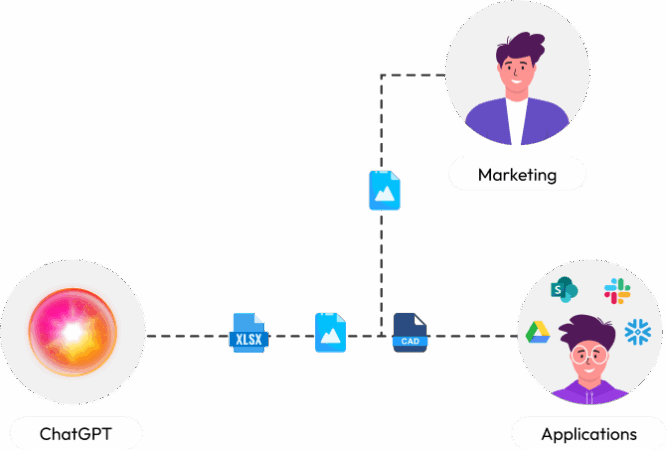

Even with enterprise plans, ChatGPT introduces new data exposure risks that traditional security tools fail to address:

Employees paste confidential data directly into AI prompts

Native controls rely on manual labels and static rules

Browser extensions slow users down and break workflows

API and developer usage creates massive blind spots

Security teams lack audit-ready visibility into AI usage

This is not a future risk. It’s happening right now across US enterprises.

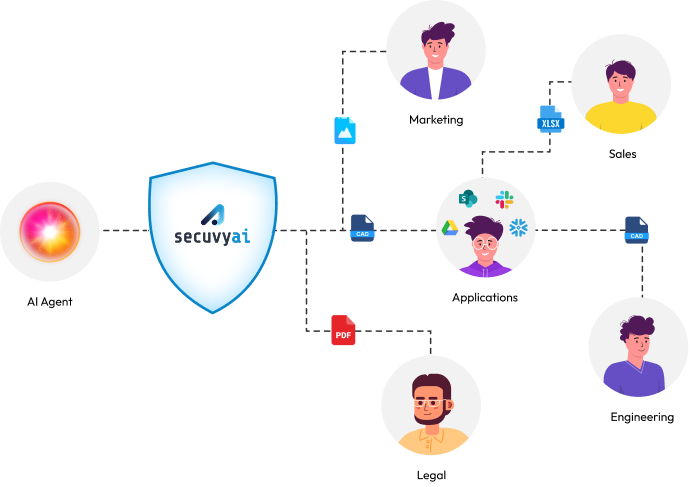

Real-Time AI Data Security & AI Data Governance Powered by Context

Secuvy uses a Model Context Protocol (MCP)-based architecture to sit invisibly between your data and LLMs. Sensitive inputs are detected, classified, and sanitized in real time before they ever reach ChatGPT or other AI systems.

Auto-Detect and Classify Sensitive Data Before It Reaches ChatGPT

Secuvy’s self-learning engine understands your data context, not just patterns. It accurately classifies:

Intellectual property and source code

Financial models and forecasts

Contracts, legal documents, and M&A data

CUI, ITAR, PHI, and regulated datasets

Internal strategy and board materials

No regex. No rule writing. No manual tuning.

Enforce AI Policies with Mask, Block, or Allow Controls

Based on sensitivity and risk, Secuvy can:

Mask sensitive fields automatically

Block high-risk prompts and files before submission

Allow safe AI usage with full visibility

Policies apply consistently across ChatGPT Enterprise, Copilot, APIs, and any LLM environment.
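How the product evaluates policies internally is not described here. As a minimal, hypothetical sketch, assuming an upstream classifier has already returned labeled, non-overlapping character spans, a mask/block/allow decision could look like the following. `Action`, `Finding`, `POLICY`, `enforce`, and the label names are illustrative assumptions, not Secuvy APIs.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    MASK = "mask"
    BLOCK = "block"


@dataclass
class Finding:
    """One sensitive span reported by an upstream classifier (hypothetical)."""
    label: str  # sensitivity class, e.g. "Customer PII"
    start: int  # offset of the first character of the span
    end: int    # offset just past the last character (exclusive)


# Hypothetical policy table: sensitivity label -> action.
# Labels not listed here default to ALLOW.
POLICY = {
    "Board-Restricted": Action.BLOCK,
    "Customer PII": Action.MASK,
}


def enforce(prompt: str, findings: list[Finding]) -> tuple[Action, str]:
    """Apply the strictest matching action; return it with the text to forward.

    Assumes findings do not overlap (an assumption of this sketch).
    """
    actions = {POLICY.get(f.label, Action.ALLOW) for f in findings}
    if Action.BLOCK in actions:
        return Action.BLOCK, ""  # nothing is sent to the LLM
    if Action.MASK not in actions:
        return Action.ALLOW, prompt  # safe prompt passes through unchanged
    masked = prompt
    # Edit right-to-left so earlier offsets remain valid after each replacement.
    for f in sorted(findings, key=lambda item: item.start, reverse=True):
        if POLICY.get(f.label) is Action.MASK:
            masked = masked[: f.start] + f"[{f.label.upper()}]" + masked[f.end :]
    return Action.MASK, masked
```

For example, `enforce("Summarize the call with Jane Doe about renewal.", [Finding("Customer PII", 24, 32)])` returns `Action.MASK` together with `"Summarize the call with [CUSTOMER PII] about renewal."`. Letting BLOCK take precedence over MASK reflects the idea that the strictest applicable control should win.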

Built for LLM Data Security Across Any Model

Secuvy secures:

Web-based AI usage

API-driven AI workflows

Retrieval-augmented generation (RAG) systems

This is LLM data security, beyond the limits of pattern-based AI guardrails.

Why CISOs Replace Legacy Tools with Secuvy

Legacy DSPM tools (like Varonis or BigID) were built to scan static databases, not AI prompts in motion. See why modern enterprises need a dedicated AI Data Security layer.

| Feature | Secuvy (AI Firewall) | Legacy DSPM / Browser DLP |
| --- | --- | --- |
| Primary Goal | Real-Time Interception (stops the leak before it happens) | Post-Event Scanning (alerts you days after the leak) |
| Deployment | 5-Minute Agentless (MCP) | 2-4 Month Deployment (heavy proxies & agents) |
| Detection Tech | Unsupervised Context AI | RegEx & Keywords (high false positives) |
| Coverage | All GenAI Apps (Web + API) | Siloed (browser only or M365 only) |
| Maintenance | Zero Manual Tuning | Constant Rule Writing |

Built for AI Data Governance and Compliance

Secuvy helps enterprises maintain continuous compliance while adopting GenAI:

HIPAA and healthcare data protection

CMMC and NIST alignment for defense environments

Financial and privacy regulations

Emerging AI governance frameworks

Security, privacy, and audit teams get continuous, audit-ready evidence of how AI systems interact with sensitive data.

High-Impact Use Cases

Enable GenAI Data Security Without Blocking Innovation

Engineering

Prevent source code and API keys from leaking into public models

Legal & M&A

Analyze contracts safely in ChatGPT Enterprise

Healthcare

Automatically redact PHI before AI processing

Compliance

Map AI usage directly to NIST AI RMF controls

FAQ: Frequently Asked Questions

01 How does the free trial work?

Join the early access waitlist, get approved, and instantly access the AutoClassification Agent. Upload a small test corpus (contracts, board decks, policies, support transcripts, etc.). In minutes, see Secuvy classify your unstructured data and run actions like detecting sensitive info, classifying sensitivity, analyzing leak impact, recommending access, previewing policies, and suggesting optimizations.

Test simple policies, e.g., “Block ‘Board-Restricted’ from AI” or “Warn for ‘Confidential – Internal’ but log it.”

It’s a fast POC: no regex building or big projects; just upload and see real results on your own docs. Ready to scale? Apply the same logic via the Secuvy MCP Server.

02 What LLM surfaces can Secuvy protect?

Define policies once; the MCP Server enforces them across Microsoft Copilot (Word, Excel, Teams, etc.), enterprise ChatGPT, internal LLM/RAG apps, and backend LLM API workflows.

Examples: Block board/M&A docs from external LLMs; allow wiki snippets but strip customer names; route CUI/ITAR only to approved internal LLMs.
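The routing examples above can be expressed as a small decision function. This is a hypothetical sketch, not the MCP Server's actual policy language: the destination name `internal-llm`, the label strings, and the `route` function are assumptions, and any destination not on the approved internal list is treated as external so the rule fails closed.

```python
# Hypothetical name for the approved internal model; anything else counts as external.
APPROVED_INTERNAL = {"internal-llm"}

REGULATED = {"CUI", "ITAR"}
RESTRICTED = {"Board-Restricted", "M&A"}


def route(labels: set[str], destination: str) -> str:
    """Decide what to do with content carrying `labels` bound for `destination`."""
    internal = destination in APPROVED_INTERNAL
    if labels & REGULATED:
        # CUI/ITAR may only reach approved internal models.
        return "allow" if internal else "block"
    if labels & RESTRICTED and not internal:
        # Board and M&A material never goes to external LLMs.
        return "block"
    if "Customer Name" in labels:
        # e.g. a wiki snippet: allowed, but customer names are stripped first.
        return "strip_customer_names"
    return "allow"
```

Checking the most restrictive categories first means a document carrying several labels always gets the strictest applicable outcome.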

03 What types of data can Secuvy detect and classify?

All the easy stuff. Also the hard stuff. The really hard stuff.

Complex unstructured content: IP/product docs, legal deals (NDAs, MSAs), executive/financial materials, customer support logs, regulated data (CUI/ITAR, clinical trials).

04 Do you see or train on my data?

No. Classification runs inside your tenant. We never train global models on your data. You control logs, retention, and deletion, and your data is removed when you uninstall.

05 How is this different from Microsoft Purview or ChatGPT Enterprise settings?

Native tools are platform-specific, rely on manual labels/regex, and lack unified visibility. Secuvy adds cross-LLM classification, org-tuned unsupervised models, and global risk analytics (e.g., top risky prompts). It complements existing controls by adding a true LLM guardrail layer.

06 Will this wreck my current LLM user experience?

No. You can match the speed of rollout to the needs of your organization. Start in monitor-only mode, then enforce for targeted user groups on critical data, then warn/redact for the rest.

Example: A user pastes a board deck → Secuvy replies, “This contains Board-Restricted info. Here’s a safe summary instead.” Users learn fast and stay productive while sensitive data stays protected.

07 Who is the Secuvy AutoClassification Agent for?

Secuvy is built for teams that need LLM security and governance, not just generic DLP:

- CISOs / Security leaders who need guardrails for ChatGPT and internal LLMs

- Privacy, legal, and compliance teams that worry about regulated data landing in external models

- Data / AI platform teams rolling out corporate ChatGPT, RAG apps, and LLM APIs at scale

- Mid-market and enterprise orgs with lots of unstructured content (docs, decks, tickets, transcripts, wikis, etc.)

If your users are pasting sensitive docs into LLMs faster than you can write regex rules, you’re the target audience.

08 Do we have to change our LLM stack to use Secuvy?

No.

Secuvy is LLM-agnostic and plugs in as a policy and classification layer, not a replacement for your LLMs.

- Keep using ChatGPT Enterprise, internal LLMs, RAG apps, and LLM APIs

- Add the Secuvy MCP Server to evaluate prompts and documents before they leave your environment

- Apply consistent policies across different tools instead of re-implementing rules N times

You keep your LLM stack; Secuvy standardizes classification, policy, and visibility across it.

09 How does Secuvy classify data without months of setup?

Secuvy uses organization-specific, unsupervised classification:

- You point it at a sample of your real content (contracts, board decks, strategy docs, tickets, etc.)

- It learns how your org actually writes and structures information, including domain-specific terms

- You can optionally define or tweak labels and classes (e.g., Board-Restricted, CUI/ITAR, M&A, Customer PII)

Instead of spending weeks building regexes and keyword lists, you get a working classification model in hours, then refine it based on real results.

10 Will this slow down ChatGPT Enterprise for my users?

Secuvy is designed to stay out of the way:

- Classification and policy checks are optimized to run in milliseconds to low seconds, even on large docs

- You can choose where to apply heavy analysis (e.g., only for certain apps, doc types, or sensitivity levels)

- For some workflows, Secuvy can pre-classify common repositories (like SharePoint or internal wikis) to reduce runtime work

The typical user experience:

“My Copilot/ChatGPT still feels fast, I just get warned (or blocked) when I try to send something truly sensitive.”

11 What does moving from POC to production look like?

Typical path:

- Free trial / early access – connect a small corpus, test classifications, and simulate policies.

- Pilot – enable monitor-only + targeted blocking for one or two business units.

- Refinement – tune classes and finalize policies.

- Scale out – extend to more teams and connect to SIEM/DLP.

Because policies are defined once and reused, expanding coverage is mostly about turning on more surfaces, not re-writing rules.

12 What do my admins actually manage day-to-day?

Admins typically:

- Review LLM usage and risk dashboards (top blocked prompts, risky teams, sensitive classes)

- Fine-tune policies and classes based on business feedback

- Handle exceptions (e.g., temporarily allow a highly trusted team or project)

- Export logs and reports for security, legal, and compliance reviews

You’re not constantly editing regexes—most work is policy decisions and tuning, not low-level rule maintenance.

13 Can I start with monitoring only and turn on blocking later?

Yes, and that’s the recommended path.

- Start with monitor-only: classify everything, block nothing

- Share early insights with stakeholders: “Here’s what people are putting into ChatGPT Enterprise today.”

- Agree on “never allowed” categories (Board-Restricted, CUI/ITAR, M&A, etc.)

- Enable targeted blocking + warnings once everyone is aligned

This avoids surprise friction and builds trust that Secuvy is a safety net, not a productivity killer.
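One way to picture this staged rollout is a single enforcement flag over an otherwise unchanged decision path. The sketch below is hypothetical: `Rollout`, `decide`, and the audit-record fields are illustrative names, not part of any Secuvy interface. In monitor-only mode every match against a "never allowed" category is recorded but allowed; flipping `enforce` turns the same matches into blocks.

```python
from dataclasses import dataclass, field


@dataclass
class Rollout:
    """Hypothetical staged-rollout switch: monitor first, enforce later."""
    enforce: bool = False  # False = monitor-only: classify and record, block nothing
    never_allowed: set[str] = field(
        default_factory=lambda: {"Board-Restricted", "CUI/ITAR", "M&A"}
    )
    audit_log: list[dict] = field(default_factory=list)

    def decide(self, user: str, labels: set[str]) -> str:
        hits = labels & self.never_allowed
        action = "block" if hits and self.enforce else "allow"
        if hits:
            # Every match is recorded, so monitor-only mode still produces the
            # "here's what people are sending" evidence for stakeholders.
            self.audit_log.append(
                {"user": user, "labels": sorted(hits), "action": action}
            )
        return action
```

Because the classification path is identical in both modes, the insights gathered during monitoring describe exactly what enforcement will later block.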

Secure Your AI Adoption Without Waiting for a Breach

Protect ChatGPT Enterprise, Copilot, and LLMs from sensitive data exposure while enabling safe innovation.